This article examines the tension between reductionism and emergentism in science. Reductionism seeks fundamental laws and a unified theory, whereas emergentism stresses the appearance of new properties at higher levels of complexity. Through examples from condensed matter physics and superconductivity, it argues that the two views are complementary in the advancement of knowledge.

Emergence and Reductionism: A brief overview

1. Introduction

Science in general, and physics perhaps most of all, has reached a challenging crossroads. The scientific research community is divided between those who believe there will soon be a “theory of everything”, which will bring scientific inquiry to an end, and those who feel (and wish) that systematic and rigorous scientific inquiry itself carries the process of creating “new scientific knowledge”, in an endless succession of learning and discovery[1].

The former group, the reductionists, believes that in principle everything follows from a set of fundamental laws which, once conveniently encoded in explicit equations, will provide not only a full understanding of Nature but also a powerful predictive tool for everything. That said, they concede with a sense of dismay that, owing either to the complicated structure of the resulting equations or to the overwhelmingly large dimension of the entities to be calculated, it is in practice unfeasible to carry out the calculation in full. Consequently, one has to rely on approximations, reasonable assumptions, intuitive models, and even occasionally on phenomenological arguments borrowed from less accurate laws.

The latter group, the emergentists, contends that there are plenty of phenomena which are not accessible from fundamental laws involving only the properties of the elementary constituents of the systems under investigation. They also claim that it is in the approximations and models that insight, and therefore predictive power, resides. Thus, for this group, it is not a pity that approximations, assumptions, etc., have to be made. Indeed, it is real science at full power, the only way at hand to properly understand Nature.

2. Reductionism versus Emergentism

In philosophical terms, it is convenient to distinguish between three types of reductionism. The first is methodological or constituent reductionism, which we all share, whereby a complex system is divided into smaller subsystems in order to study it better. The second is conceptual or epistemological reductionism, according to which the properties of one level can be derived straightforwardly from the ‘laws’ of the lower-lying levels; this constructionist hypothesis has been proven wrong in many cases where the emergence of new phenomena cannot be derived directly from a full understanding of the lower levels. The third type of reductionism, which is the strongest, is causal or ontological reductionism. We could simply describe it with the idea that one level is ‘nothing more’ than the lower one, and so on, down to elementary particles; the whole is nothing more than the sum of its parts. Reality is thus ontologically reduced to the simplest level.

Condensed matter physics teaches us that moving from one level of organization to a higher one is not possible simply by operating with the laws and concepts of the lower level. It requires new ideas, new principles that are exclusively characteristic of the higher level. This shows us that epistemological and, of course, ontological reductionism cannot work, not even in principle, when there are many interacting bodies[2].

Nevertheless, the reduction of a higher-level (complex) system is often conveniently assisted by breaking it down into smaller fundamental “units”, which are not necessarily simply the constituents of its basal (lower) level. They may be, and normally are, new modes of arrangement that are neither envisioned in nor derived from the properties of the lower-level constituents, but arise from the “new laws” which characterize the behavior of the higher level. In condensed matter physics, it is very rare to be able to predict the entire behavior of a material starting from its fundamental constituents. It is often the experiment that reveals new modes of arrangement and unexpected behaviors not directly linked to the properties of the constituents of the basal (lower) level.

In a nutshell, understanding phenomena arising at higher levels is facilitated by reducing them to a collection of simpler independent systems, but the specific structure of these subsystems depends on the specific properties of the higher level we want to analyze.

This introduces ‘democracy’ among the various branches of physics and among all sciences, since they all appear as equally fundamental. This is in direct contradiction with the claim of ontological or conceptual reductionism that ‘ontologically reduces’ reality to the lowest level. Instead, it brings to the fore a kind of ontological pluralism by attributing physical reality to each level of complexity and, as a consequence, autonomy to each area of the physical sciences.

In the past, physics has been highly reductionist, analyzing nature in terms of its increasingly smaller constituents and revealing their fundamental unifying laws. Unification and reductionism have dominated fundamental theoretical physics for much of the last century. The former characterizes the hope of providing a unified description of physical phenomena. The latter is the aspiration to minimize, without constraints, the number of independent concepts needed to formulate fundamental laws.

The idea of searching for the most elementary constituents of Nature has been deeply rooted. When the poet William Blake needed to summarize all of science in one line, he spoke of “Democritus' atoms and Newton's particles of light”. From the Greece of Democritus and Leucippus to Blake's time and our own, as Steven Weinberg reminds us, the idea of the fundamental particle has been the symbol of science's deepest goal: to understand the complexity of nature in simple terms.

Einstein always defended a unifying vision coupled with a radical form of reductionism: ‘The supreme test of a physicist is to arrive at those elementary universal laws from which the cosmos can be constructed by pure deduction.’

Reductionism is a way of studying nature that has brought spectacular improvements to humanity and continues to be, for many, the central paradigm of physics[3].

The theory of everything is the ultimate goal of reductionism. Equations capable of describing everything. With that, science would be over. As John Horgan provocatively announced in his book, The End of Science: Facing the Limits of Knowledge in the Twilight of the Scientific Age, “The equation of everything would bring about the end of science”.

Bloom says that "no poet can hope to approach, much less surpass, the perfection of his predecessors (Shakespeare, Dante, etc.), and therefore modern poets are essentially tragic, late figures. Modern scientists are also latecomers, and their tragedy is even greater than that of the poets. Scientists should not surpass Shakespeare's realm, but rather Newton's laws of motion, Darwin's theory of natural selection and Einstein's general theory of relativity”.

For some, the theory of everything would mean the end of science. All that would remain for scientists would be to refine and apply the brilliant discoveries of their predecessors. Science as an intellectual adventure would be over. Is this really the case? Let us consider the case of condensed matter. For much of this field, the equation of everything already exists, yet it tells us almost nothing interesting about anything of interest.

To simplify, we can say that the material world, condensed matter, is governed by a single physical law: Coulomb's law. As we will recall, this law tells us that electric charges attract or repel each other with a force proportional to the product of the charges and inversely proportional to the square of the distance. Coulomb's law and Pauli's exclusion principle are the pillars on which condensed matter and life itself are built.

Indeed, for the chemistry and physics of condensed matter, the Schrödinger equation of non-relativistic quantum mechanics, with Coulomb's law as the fundamental interaction, describes practically the entire world of human beings. This is what Dirac proclaimed in 1929: ‘The underlying physical laws necessary for the mathematical theory of a large part of physics and the whole of chemistry are thus completely known, and the difficulty is only that the exact application of these laws leads to equations much too complicated to be soluble. It therefore becomes desirable that approximate practical methods of applying quantum mechanics should be developed, which can lead to an explanation of the main features of complex atomic systems without too much computation.’
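For concreteness, the equation referred to here can be written out explicitly. The block below is the standard textbook form of the non-relativistic Schrödinger equation for electrons and nuclei interacting through Coulomb's law. The notation is an assumption introduced for illustration and does not appear in the original text: $N_e$ electrons of mass $m$ at positions $\mathbf{r}_j$, and $N_i$ nuclei of mass $M_\alpha$ and charge $Z_\alpha e$ at positions $\mathbf{R}_\alpha$.

```latex
i\hbar\,\frac{\partial \Psi}{\partial t} = \hat{H}\,\Psi ,
\qquad
\hat{H} =
 - \sum_{j=1}^{N_e} \frac{\hbar^2}{2m}\,\nabla_j^2
 - \sum_{\alpha=1}^{N_i} \frac{\hbar^2}{2M_\alpha}\,\nabla_\alpha^2
 - \sum_{j=1}^{N_e}\sum_{\alpha=1}^{N_i}
     \frac{Z_\alpha e^2}{4\pi\varepsilon_0\,|\mathbf{r}_j-\mathbf{R}_\alpha|}
 + \sum_{j<k} \frac{e^2}{4\pi\varepsilon_0\,|\mathbf{r}_j-\mathbf{r}_k|}
 + \sum_{\alpha<\beta}
     \frac{Z_\alpha Z_\beta e^2}{4\pi\varepsilon_0\,|\mathbf{R}_\alpha-\mathbf{R}_\beta|}
```

The first two sums are kinetic energies; the remaining three are the electron-nucleus attraction, the electron-electron repulsion and the nucleus-nucleus repulsion, each an instance of Coulomb's law. The Pauli exclusion principle enters through the requirement that $\Psi$ be antisymmetric under the exchange of any two electrons.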

The “Theory of Everything” contained in Schrödinger's equation is not a theory of everything. We know that the equation is correct because it has been solved with great precision for quite a few systems with a small number of particles (isolated atoms and small molecules) and the results have shown great agreement with experiment. However, it cannot be solved exactly even for a system composed of only two electrons in the field of a clamped positive charge, namely the hydrogen anion H⁻. Naturally, solving Schrödinger's equation exactly for systems with more than two electrons is hopeless.

This is not something that can be solved in the future with the advent of much faster computers. If N is the amount of computational memory needed to represent the quantum wave function of one particle, the amount of memory needed to represent the wave function of k electrons is N^k. Condensed matter physics, surface physics, and nanotechnology are full of situations whose understanding, i.e., our ability to predict what will happen in an experiment, degrades if we divide the system into parts. Due to the overwhelmingly large dimensionality of the Hilbert space, the quantitative change from the microscopic to the macroscopic level becomes qualitative. A new physics emerges whenever the “symmetry of the underlying laws is broken” (see Appendix 1).
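The exponential growth of memory with the number of electrons can be made concrete with a short sketch. It assumes, purely for illustration, that the one-particle wave function is stored on a grid of N points and that each amplitude is a complex number occupying 16 bytes; the function names and the chosen values of N and k are hypothetical and not taken from the original text.

```python
def wavefunction_amplitudes(n_per_particle: int, k_electrons: int) -> int:
    """Number of amplitudes needed to store a k-electron wave function
    when one particle needs n_per_particle amplitudes (N to the power k)."""
    return n_per_particle ** k_electrons


def memory_bytes(n_per_particle: int, k_electrons: int,
                 bytes_per_amplitude: int = 16) -> int:
    """Memory in bytes, assuming one complex double (16 bytes) per amplitude."""
    return wavefunction_amplitudes(n_per_particle, k_electrons) * bytes_per_amplitude


if __name__ == "__main__":
    N = 1000  # assumed: 10 grid points along each of 3 spatial dimensions
    for k in (1, 2, 3, 5, 10):
        print(f"k = {k:2d} electrons -> {memory_bytes(N, k):.3e} bytes")
```

Under these assumptions, ten electrons already require about 1.6 × 10^31 bytes, which illustrates why faster computers alone do not remove the obstacle.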

Of course, as Laughlin and Pines[4] pointed out, it is possible to make approximate calculations for large systems, and it is precisely thanks to these calculations that we have understood why atoms are the size they are, when and why they form chemical bonds, why solids have the properties they have, why some things are transparent while others reflect or absorb light. With a little more experimental information (and this is conceptually decisive), it is even possible to predict the atomistic configuration of small molecules, the rate of certain chemical reactions, structural phase transitions, ferromagnetism and even, in some cases, transition temperatures in superconductors. But the schemes for making approximations are not deductions from first principles but rather an art linked to experimentation and, therefore, tend to be less reliable precisely when their reliability is most needed: that is, when experimental information is scarce, physical behavior is unprecedented and key features have not yet been identified.

The vast majority of scientists accept, without question, the epistemological reductionist hypothesis. It consists of assuming that the ultimate functioning of all matter, animate and inanimate, is determined by a set of fundamental laws, laws that we know well, except when pushed into the so-called “singularities” such as infinite densities of matter or charge, infinite temperatures, etc.[5]

The danger, however, is to take the reductionist attitude to extremes and claim, in the field of experimental science, that all physics and chemistry can be reduced to understanding the behavior of elementary particles. Similarly, this would imply that all biology and medicine consist of understanding the functioning of DNA, and conclude that the rest would just be “cooking”. This is a mistaken point of view, and it leads to pernicious extrapolations to various fields of social sciences.

The constructionist hypothesis[6] fails when confronted with the difficulties of scale and complexity. In a famous article, ‘More is different,’ Phil Anderson[7] states that "The reductionist hypothesis does not imply a “constructionist” one. The ability to reduce everything to simple fundamental laws does not imply the ability to start from those laws and reconstruct the universe. The behavior of large aggregates of “elementary” particles cannot be understood as a simple extrapolation of the properties of a few particles.”

The key to understanding these emergent properties is what is called “broken symmetry”: laws have a simplicity and a symmetry that is not manifested in the consequences of those laws. When trillions of atoms come into close contact, forming a complex system, new types of ‘laws’ appear. Quantity becomes quality. A huge number of atoms (of the order of Avogadro’s number, 10^23) can do many things that a single atom cannot. New qualities appear. Understanding these new qualities is the goal of solid-state physics.

Our ordinary environment provides us with the simplest and most important laboratory for studying what have been called emergent properties, those properties of objects, including ourselves, that are not contained in our microscopic description: life and consciousness, of course, but also very simple properties such as rigidity, superfluidity, ferromagnetism, and superconductivity. Let us consider the last of these in detail.

A canonical example of emergence is the phenomenon of superconductivity, i.e., the expulsion of the magnetic field from inside the material and the absence of electrical resistance (and therefore of energy dissipation), originally exhibited by some materials at very low temperatures, close to absolute zero (-273 degrees Celsius). Superconductivity does not present any new laws. However, the explanation for this emergent “new quality” had to wait almost fifty years after its discovery in 1911 at Leiden by the Dutch physicist Kamerlingh Onnes, until the American physicists J. Bardeen, L.N. Cooper and J.R. Schrieffer published[8] the quantum theory of this phenomenon in 1957. This is the BCS theory of superconductivity, whose acronym is composed of the initials of its three discoverers. It took 46 years to understand superconductivity, even though the physical mechanisms of electron movement in metals were discovered between 1926 and 1932 by Bloch, Peierls and other pioneers of quantum physics.

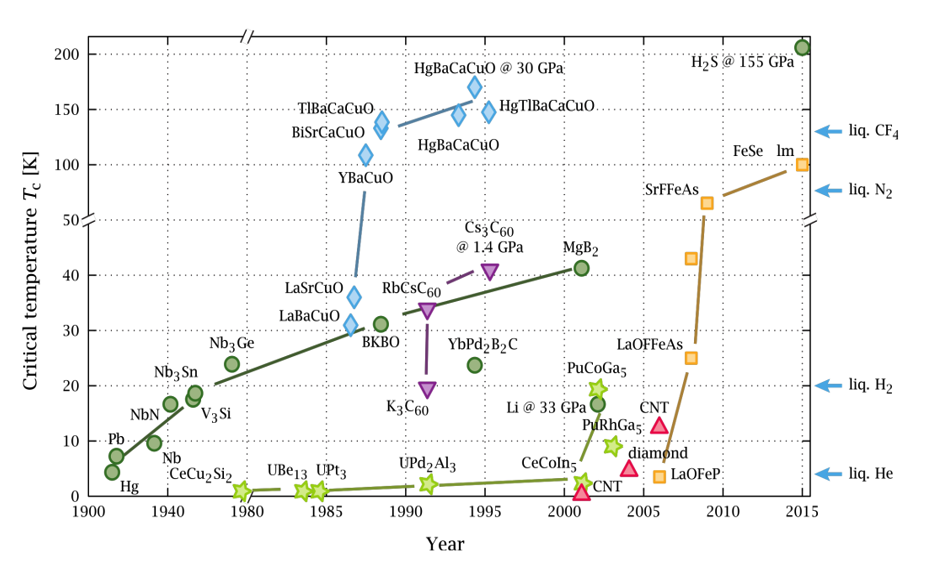

Superconductivity is destroyed when the temperature, external magnetic field or electric current density exceeds certain critical values. The critical temperature of virtually all superconducting materials known before 1986 is of the order of a few kelvin, the temperature of liquid helium, which is very expensive to obtain, limiting and hindering large-scale technological application. Raising the critical temperature has been one of the most important problems in 20th-century physics. The record for these traditional superconductors was set by John Gavaler of the Westinghouse Laboratory in 1973, in thin films of germanium and niobium, with critical temperatures of 23.2 K. Milestones in the history of classical superconductivity technology include the discovery in 1961 of superconductors with high critical fields and currents and the manufacture of multifilamentary conductors (1967-1970). In 1986, Bednorz and Muller[9] discovered so-called high-temperature superconductors in rare earth oxides. Subsequently, a critical temperature of 40 K was obtained[10] in MgB2, and in 2015, superconductivity was observed at 200 K in SH2 at a pressure of 155 gigapascals[11]. Ion Errea et al.[12] have shown that, similar to what happens in phases of water at high pressures, quantum fluctuations of the proton change the phase diagram of SH3, which is the pathway for superconductivity in SH2.

The explanation for this absence of friction in the movement of electrons in traditional superconductors is based on a peculiarity of the interaction between two electrons within a crystal. Since all electrons have a negative charge, we would normally expect repulsion between any pair of these elementary particles. At low temperatures, however, this repulsion is more than compensated for by an attractive force that appears between the electrons as follows: an electron interacts with the lattice of positive metal ions, attracting it and thus causing a small local deformation of the crystal. This deformation produces a small region of net positive charge, which can attract a second electron if it has the opposite spin to the first electron.

The two electrons therefore remain bound together through the mediation of the positive ionic lattice, and their movement is limited as if they were bound by an elastic spring. These pairs of electrons are called Cooper pairs. The resistivity of a metal in its normal state appears as a result of electron collisions, both with static imperfections such as impurities and with dynamic deformations such as lattice vibrations, phonons. If an electron in a Cooper pair encounters an imperfection and its movement is distorted, this is quickly compensated by the restoring force of the other electron in the pair. This surprising situation only persists if the available amount of thermal energy is insufficient to overcome the binding energy between the two electrons.

The BCS theory shows that superconductivity can be understood as the result of the association of electrons into pairs mediated by their coupling to phonons. Condensation causes the quantum nature of the pairs to manifest itself as a phase that varies gradually over macroscopic dimensions. Macroscopic quantities such as current density can be described as a function of this phase.

Today, however, we do not understand the origin of high-temperature superconductivity in cuprates and iron-based compounds. Superconductivity in these materials does not fit the explanation described above.

In other superconductors, superconductivity is mediated by the electron-phonon interaction. This is also the case in hydrogen sulfide, although in this case the phonons are strongly anharmonic.

Figure 1: Evolution of critical temperature in superconducting materials over time.

Figure 1 shows the evolution over time of the critical temperature of various superconductors, from the discovery of superconductivity in mercury (Hg) until 2015.

3. Superconductivity is an emergent property

Understanding how complexity arises from the simplicity of a few fundamental laws is a fascinating task. The fascination stems from the emergence of properties, new properties that were not present in the basal material substrate of the complex system. The new properties turn out to be congruent with, but for now not deducible from, those of its constituents. For the mind or for the computer, the physics of the ‘hardware’ does not matter much. When we observe natural phenomena, we do not see the physical laws but the consequences of these laws. Highly complex asymmetrical structures arise from very symmetrical laws. What matters is seeing the emergence of new properties: seeing how the whole is much more than the sum of its parts.

Perhaps this is one of the philosophical keys to the science of our century: what we observe emerges from more elementary and symmetrically ordered substrates. Emergent properties must be consistent with the physical laws of the lower levels, yet they cannot be conceptually deduced from them. Molecular biology does not violate the laws of chemistry, but it contains ideas that cannot be directly deduced from those laws (see Appendix 2).

Throughout this century, we have learned that knowledge is structured on many levels, practically decoupled from each other although each one is consistent with the previous one. There is an intellectual autonomy of the more complex levels with respect to the substrates of which they are composed. At each level, new concepts appear that are not calculable and often are unimaginable by simply looking at the lower levels. The rigidity of a stone has no equivalent at the atomic scale. Organisms have properties that do not even have meaning at the cellular level.

The study of new behaviors at each level of complexity requires research that is ‘as fundamental in nature as any other’. The elementary entities of condensed matter physics obey the laws of particle physics, but condensed matter is not ‘applied particle physics’, nor is chemistry applied many-body physics, nor is the mind the brain.

Chemistry is much more than applied physics.[13] And when we talk about chemistry, we know very well what we are talking about. It is not the quark but the idea of the chemical bond that allows us to understand much of chemistry without requiring us to delve deeper and deeper into the microscopic details that give rise to it. This topic has been analyzed by Schweber[14]. His conclusion is that, philosophically, the laws of chemical bonding break the chain of reductionism and make it unnecessary for higher levels of organization to delve into the laws of deeper underlying levels, despite claims that we would have to add the electroweak forces, with their violation of parity, to electromagnetism as the essential basis of chemistry.[15] Chemistry, with its already well-established theory of chemical bonding[16], has contributed in an essential way to the progress of humanity (the fight against hunger, advances in medicine, etc.). Chemistry is reducible to physics, provided we forget almost all of chemistry!

In short, in Anderson's words: “ … there are many more levels, there is much more cognitive distance from ethics to DNA than from DNA to elementary particles”.

Each level, decoupled from the previous ones, has “its own fundamental laws” and its set of ‘quasiparticles’, using the usual terminology in many-body physics. But it is not enough to know the fundamental laws of each level. It is the solution of the equations, and not the equation itself, that provides a description of physical phenomena. Emergence refers to the properties of the solutions. Dyson reminds us[17] that to understand space-time, Einstein's equations of general relativity are not enough; it is also necessary to find the unexpected consequences of the solutions to the equations.

The behavior of large aggregates of “elementary” particles cannot be understood ‘as a simple extrapolation of the properties of a few particles’. Although there may be indications of how to relate one level to another, it is almost impossible to deduce the complexity and novelty that arises upon aggregation. This does not invalidate the usefulness of techniques and ideas from one field in another; we have clear examples in both directions.

An essential task of theoretical physics in the future will not be so much to write the “ultimate equation” as to understand emergent behavior in its various manifestations, including, in due time, the emergence of life itself. We will continue to cherish the precious values of reductionism, but we will delve deeper and deeper into the emergence that arises from complexities of all kinds.

The process of ‘emergence’ is the key to much of the structure of 21st-century science. Let me venture a prediction. Emergence, not Lederman's divine particle or the ‘dream of a final theory,’ will dominate the future. We must expand the symbol of the poet Blake, reclaiming the fundamental nature of our understanding of complexity, precisely from the same goal, to understand the complex in simple terms, in terms that can and must be different at each level.

Many of us see the world not as a hierarchy in which all knowledge derives from divine equations, but as a hierarchy structured in levels separated by degrees of emergence, each intellectually disconnected from the substrate. The value of reductionism lies in intellectually unifying the various sciences and in removing redundancies, incongruent concepts and false assumptions, not in being the programme that will explain reality to us completely.

4. Conclusion: Reductionism and Emergentism

Emergence and reductionism are not opposites but complementary[18]. When we are able, at the appropriate level, to reduce emergence, we embed it in a vast web of connections that strengthen it conceptually. This is Anderson's view, with which I agree; it therefore seems appropriate to conclude with Anderson's words[19], written a few years after his pioneering article “More is different” was published in the journal Science[20]. He wrote: “When one succeeds in finding a reductionist explanation for a given phenomenon, one embeds it into the entire web of internally consistent scientific knowledge; and it becomes much harder to modify any feature of it without “tearing the web”.”

In short, the two views, reductionism and emergentism, complement each other and are not enemies. We need reductionism with emergence and emergence with reductionism.

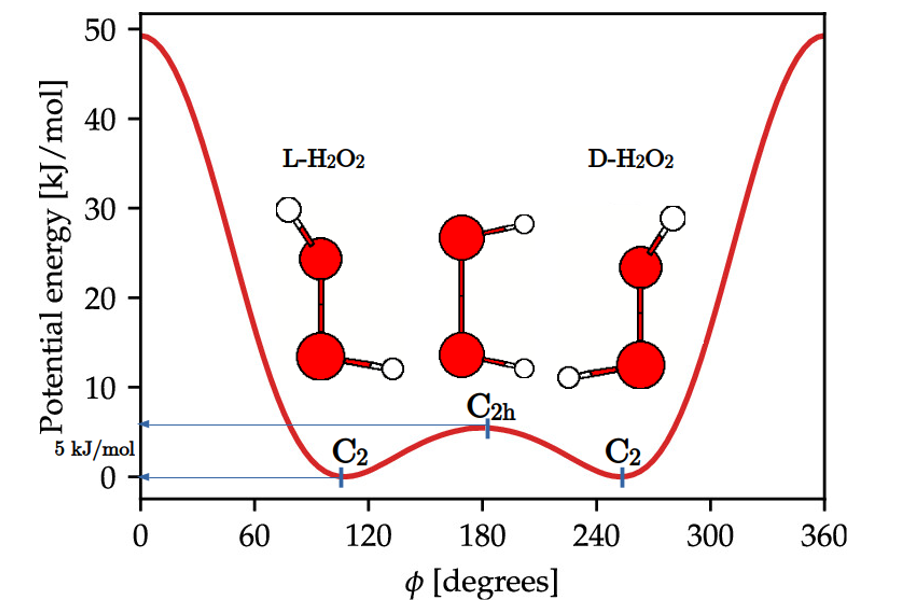

Appendix 1

Let us digress for a moment and discuss what symmetry breaking means. Consider hydrogen peroxide, H2O2. The molecule is not planar: the H-O-O-H backbone is known to make a dihedral angle ϕ = 111.5 degrees, which yields a molecular structure of C2 point group symmetry, lacking a center of inversion. The planar, C2h-like structure is a transition state separating the L and D enantiomers by an energy barrier of ~5 kJ/mol, as shown in Figure A1. That is, on leaving this transition state the inversion symmetry is broken and the molecule spontaneously chooses one of the two symmetry-broken states, L or D.

However, the Hamiltonian operator for both L and D enantiomers is the same, because it depends solely on the “relative” internuclear distances, which are equal for both enantiomers. Consequently, the Schrödinger equation must yield two energy-degenerate solutions, ΨL and ΨD, and in accordance with Bohr’s superposition principle, the quantum mechanical description would correspond to the coherent superpositions (ΨL ± ΨD), with “+” for the ground state and “−” for the excited state. The reality is that we have two ground states, ΨL and ΨD, which tunnel from one to the other with high frequency, of the order of 10^12 times per second at room temperature, due to the small transition energy barrier. Larger molecules possessing atoms heavier than hydrogen take days, even years, to tunnel. This is why we can bottle, for instance, L-alanine, ΨL, D-alanine, ΨD, or even the racemate (a mixture of L- and D-alanine) separately, but we cannot bottle the coherent superpositions (ΨL ± ΨD). The symmetry is broken and its restoration time turns out to be long enough to make the symmetry-broken states stable. This is the essence of the symmetry-breaking phenomena that Anderson applied to systems with continuous symmetry. Thus, Anderson explained that antiferromagnetism is a new form of symmetry breaking, in which the ground state is not even an eigenstate of the Hamiltonian, unlike ferromagnetism and similar to the case of superconductivity. In both the ferromagnetic and antiferromagnetic cases, the ground state breaks the full symmetry of the Hamiltonian and the characteristic Goldstone modes (spin waves) arise, i.e., massless excitations that are a direct and general consequence of any spontaneous breaking of a continuous symmetry. He also predicted that a massless boson accompanies a broken continuous symmetry, for the symmetry is restored by the zero-point vibrational modes, which must therefore have a divergent amplitude, i.e., zero energy.

He wrote the relevant effective Hamiltonian and estimated that the time needed for the restoration was of the order of years. Of this article, Anderson himself said that it "contains the seed for all my later ideas about symmetry breaking and Goldstone's modes, and the relationship between microscopic and mesoscopic physics".
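The tunneling between the L and D states described in Appendix 1 can be illustrated with a minimal two-state model. This is a sketch under stated assumptions, not the Hamiltonian Anderson wrote: the two enantiomers are taken as basis states of equal energy e0, coupled by a hypothetical tunneling matrix element j > 0, so that the Hamiltonian is [[e0, -j], [-j, e0]]. The function names and the numerical values of j are illustrative only.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant in J*s


def eigenenergies(e0: float, j: float) -> tuple:
    """Energies of the symmetric (L + D) and antisymmetric (L - D)
    superpositions for the assumed Hamiltonian [[e0, -j], [-j, e0]]."""
    return (e0 - j, e0 + j)


def probability_in_d(t: float, j: float) -> float:
    """Probability of finding the system in D at time t (seconds),
    given that it was prepared in L at t = 0."""
    return math.sin(j * t / HBAR) ** 2


def transfer_time(j: float) -> float:
    """Time for a complete L -> D transfer in this model."""
    return math.pi * HBAR / (2.0 * j)


if __name__ == "__main__":
    # Hypothetical tunneling matrix elements in joules: the smaller the
    # coupling (higher barrier, heavier atoms), the longer the transfer.
    for j in (1e-22, 1e-30, 1e-40):
        print(f"j = {j:.0e} J -> transfer time = {transfer_time(j):.3e} s")
```

In this model the stationary states are the coherent superpositions, with the symmetric one lowest in energy, while a molecule prepared in L oscillates between L and D. As j decreases, the transfer time grows without bound, which is the sense in which a symmetry-broken state becomes effectively stable.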

Appendix 2

The basal chemical component of molecular biology is the “genome”, a set of forty-six X-shaped chromosomes, each one consisting of a number of selected DNA fragments conveniently fused. Each cell of a living organism contains its own copy of the genome. The genome encompasses genetic information, and decoding such information consists in deciphering the molecular messages encoded by each of the chromosomes. This is why the genome is often called the “book of the cell”. The reductionist approach claims that this information suffices for a skillful chemist to make (synthesize) the organism in the laboratory. However, this is incautious, to say the least, for the time being. Living organisms contain plenty of molecules that are not encoded in the genome. There is no genome for lipids, nor one to instruct them to fold in the particular way they do to form cell membranes. There is no genome for bones, nor for neural signaling, etc. Emergentism contends that there is no way to make the organism with the sole information carried by the genome. Ball puts it in plain English[21]: “The genome is the book of the cell in much the same way the dictionary is the book of performance of Waiting for Godot. It is all there, but you will not ded.

[1] Echenique, P.M. (2017), Dinámica de iones y electrones en sólidos y superficies y pequeñas pinceladas sobre ciencia (Discurso de Entrada RAC), Chapter 8, pp. 65-75, RAC. This article has been adapted by the editors from Pedro Miguel Echenique's inaugural address to the Real Academia de Ciencias Exactas, Físicas y Naturales with permission from the author.

[2] M. Sunjic, "Critical comments on reductionism in physical sciences", Metanexus Conference "Cosmos, Nature and Culture: A Transdisciplinary Conference", Phoenix, Arizona (2009).

[3] S. Weinberg, Dreams of a Final Theory, New York, Pantheon (1992).

[4] R.B. Laughlin, D. Pines, Proc. Natl. Acad. Sci. 97, 1, 28 (2000).

[5] It is worth noting that it may be fallacious to consider such "singularities" and many others of the like as physically meaningful entities, when there are none in nature. See: E. Elizalde, Universe 9, 33 (2023).

[6] J. Bardeen, L.N. Cooper, J.R. Schrieffer, Phys. Rev. 106, 162 (1957).

[7] P.W. Anderson, Science 177, 343 (1972).

[8] J. Bardeen, L.N. Cooper, J.R. Schrieffer, Phys. Rev. 106, 162 (1957).

[9] J.G. Bednorz, K.A. Muller, Z. Phys. B 64 (2), 189 (1986).

[10] J. Nagamatsu et al., Nature 410, 63 (2001).

[11] A.P. Drozdov et al., Nature 525, 73 (2015).

[12] I. Errea et al., Nature 532, 81 (2016).

[13] R. Hoffmann, "The same and not the same", Columbia University Press, New York, p. 18 (1995).

[14] S.S. Schweber, Phys. Today 34 (1993).

[15] J. Santamaría Antonio, "The principle of structure of ordinary matter and paradoxes in chemistry", Opening session of the academic year, Royal Academy of Sciences (2016).

[16] G. Frenking, S. Shaik (Eds.) "The Chemical Bond" Vol I, II. Wiley-VCH, Weinheim, Germany (2014).

[17] F. Dyson, Phys. Today 48 (2010).

[18] J. Butterfield, "Less is Different: Emergence and Reduction Reconciled", Found. Phys., 41, 1065 (2011).

[19] P.W. Anderson, "More and different: notes from a thoughtful curmudgeon", World Scientific p. 134 (2011).

[20] P.W. Anderson, Science 177, 343 (1972).

[21] P. Ball, "Stories of the Invisible. A guided tour of molecules", Oxford University Press, p. 163, London, (2001).
